When Kafka becomes your internal API surface

In many teams, Kafka starts as “just a bus.” A few producers publish events. A few consumers read them. Then internal tools appear: routing alerts, building backfills, reconciling metrics, enriching CRM data, triaging incidents. Without a platform team, the system often drifts into a fragile web of ad-hoc consumers, undocumented schemas, and one-off replay scripts.

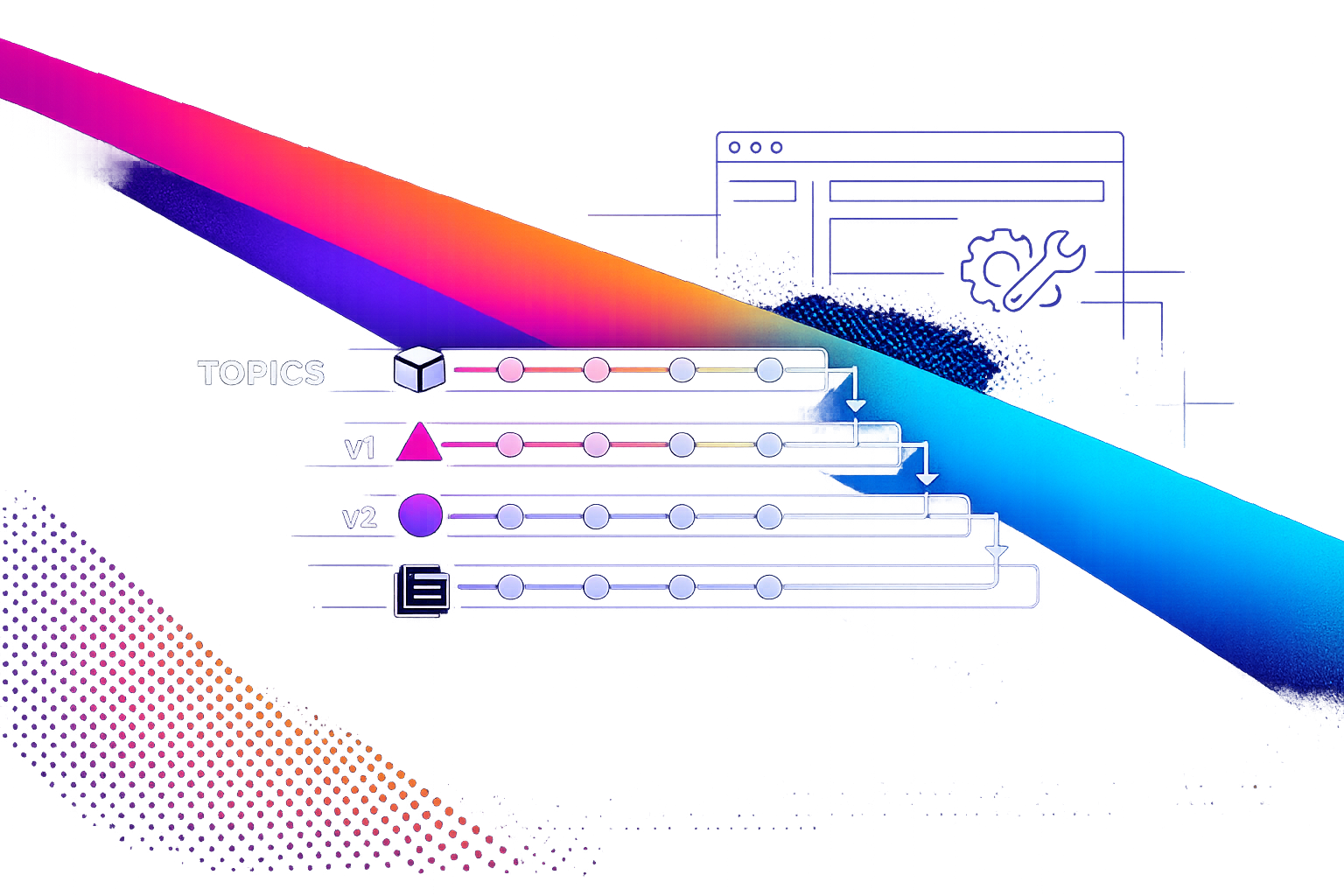

A better model is to treat Kafka topics as versioned, typed APIs. The topic name and schema become your contract. Consumers become clients. Replays become a first-class operation with guardrails. Observability becomes end-to-end, not “best effort.”

Define the contract: topics as public interfaces

If a topic is an API, it needs the same properties you’d demand from an HTTP interface:

- Stable naming that signals domain boundaries and ownership.

- Typed schemas with explicit evolution rules.

- Versioning that is visible and enforceable.

- Documentation that answers “what is this event for?” and “how do I use it?”

Start by declaring a small set of “contract topics.” These are allowed to be consumed by many internal tools. Everything else is private to a service until it meets the bar.

Topic naming that scales

Keep names boring and searchable. A practical convention is:

- {domain}.{entity}.{event}.v{N} for facts that matter across teams

- {domain}.{system}.internal.{purpose} for private streams

The key is that the name carries intent and version. That makes it safe to build internal tools on top without guessing.
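One way to keep the convention honest is to check names mechanically, for example in CI before a topic is created. The sketch below is a minimal classifier for the two naming shapes above. The allowed character set, the rule that versions start at 1, and the function name `classify_topic` are assumptions made for illustration, not part of any Kafka API.

```python
import re

# Assumed segment rules: lowercase, starts with a letter, digits and hyphens allowed.
SEGMENT = re.compile(r"[a-z][a-z0-9-]*")
# Assumed version rule: "v" followed by a positive integer (v1, v2, ...).
VERSION = re.compile(r"v[1-9][0-9]*")


def classify_topic(name: str) -> str:
    """Classify a topic name as 'contract', 'private', or 'invalid'."""
    parts = name.split(".")
    if len(parts) != 4 or not all(SEGMENT.fullmatch(p) for p in parts):
        return "invalid"
    # "internal" in the third position is reserved for private streams.
    if parts[2] == "internal":
        return "private"
    if VERSION.fullmatch(parts[3]):
        return "contract"
    return "invalid"


if __name__ == "__main__":
    for topic in ("billing.invoice.paid.v2", "billing.ledger.internal.dedupe", "invoices"):
        print(topic, "->", classify_topic(topic))
```

Reserving the word "internal" in the third position keeps the two shapes unambiguous, at the cost of never being able to name a contract event "internal".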

Typed schemas and evolution rules

Use a schema registry and enforce compatibility. Avro, Protobuf, and JSON Schema can all work. What matters is that changes follow rules consumers can rely on:

- Additive changes are allowed (new optional fields).

- Breaking changes require a new versioned topic (or a hard version bump with clear migration).

- Fields have documented semantics, not just names.

Without this, “event-driven tools” quickly become “event-driven surprises.”
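To make the rules concrete, here is a toy compatibility check over a simplified schema model (a dict of field name to type and required flag). It is a sketch of the idea only: a real schema registry performs this check for Avro, Protobuf, or JSON Schema with far more nuance, and this version deliberately treats every type change as breaking, including widenings that may be safe in practice. The field layout and the function name `breaking_changes` are hypothetical.

```python
from typing import Dict, List

# Simplified field spec, assumed for illustration: {"type": str, "required": bool}
Field = Dict[str, object]
Schema = Dict[str, Field]


def breaking_changes(old: Schema, new: Schema) -> List[str]:
    """List the changes in `new` that existing consumers of `old` cannot rely on."""
    problems: List[str] = []
    for name, spec in old.items():
        if name not in new:
            problems.append(f"removed field: {name}")
        elif new[name]["type"] != spec["type"]:
            problems.append(f"type changed: {name}")
        elif new[name]["required"] and not spec["required"]:
            problems.append(f"optional field became required: {name}")
    for name, spec in new.items():
        if name not in old and spec["required"]:
            problems.append(f"new required field: {name}")
    return problems


if __name__ == "__main__":
    v_old = {"id": {"type": "string", "required": True}}
    v_new = {**v_old, "note": {"type": "string", "required": False}}
    # An empty list means the change is additive under this toy model.
    print(breaking_changes(v_old, v_new))
```

A non-empty result is the signal that the change belongs in a new versioned topic rather than in the existing one.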

Turn events into replayable backfills without heroics

Internal tools often need replay: you shipped a bug, you updated scoring logic, you added a new downstream destination, or you need to rebuild a derived table. The mistake is treating replay as a one-off script run by the one person who remembers how offsets work.

Design replay as a product feature of your event system.

Backfill patterns that work in practice

- Consumer-group replay: reset offsets for a dedicated backfill group and reprocess the topic. This is clean, but you must isolate side effects.

- Forked pipeline: read from the source topic, write transformed output to a new “rebuild” topic, then swap downstream consumers.

- Idempotent sink rebuild: re-run into an idempotent destination (upserts keyed by stable IDs) so duplicates are safe.

Pick one default per data shape. For example, entity change events with stable keys pair well with idempotent sinks. Append-only analytics events often need forked pipelines to avoid mixing old and new semantics.
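As an illustration of the idempotent sink pattern, the sketch below upserts entity change events into SQLite keyed by a stable ID, and ignores events older than what is already stored. The table layout, the `event_ts` ordering field, and the function names are assumptions for the example. The `ON CONFLICT ... DO UPDATE` clause needs SQLite 3.24 or newer, and a production sink would use its own database's upsert syntax.

```python
import sqlite3


def open_sink() -> sqlite3.Connection:
    """Create an in-memory sink table keyed by a stable entity ID."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE customer ("
        "id TEXT PRIMARY KEY, email TEXT NOT NULL, event_ts INTEGER NOT NULL)"
    )
    return conn


def apply_event(conn: sqlite3.Connection, event: dict) -> None:
    """Upsert one event; replays and duplicates leave the final state unchanged."""
    conn.execute(
        """
        INSERT INTO customer (id, email, event_ts)
        VALUES (:id, :email, :event_ts)
        ON CONFLICT(id) DO UPDATE SET
            email = excluded.email,
            event_ts = excluded.event_ts
        WHERE excluded.event_ts >= customer.event_ts
        """,
        event,
    )
    conn.commit()


if __name__ == "__main__":
    sink = open_sink()
    events = [
        {"id": "c1", "email": "a@example.com", "event_ts": 1},
        {"id": "c1", "email": "b@example.com", "event_ts": 2},
    ]
    for e in events + events:  # second pass simulates a full replay
        apply_event(sink, e)
    print(sink.execute("SELECT id, email, event_ts FROM customer").fetchall())
```

The `WHERE` guard is what makes an out-of-order replay safe: an older event can arrive after a newer one without overwriting it.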

Guardrails: make replays safe by default

Replays become dangerous when the same stream drives alerts, billing, emails, and state mutations. Add guardrails:

- Side-effect isolation: separate “compute” consumers from “act” consumers. Computation can replay; side effects require explicit enablement.

- Replay headers: mark replayed messages (e.g., x-replay-id, x-replay-start) so sinks can branch logic.

- Rate limits: control throughput to protect downstream systems.

- Dry-run modes: compute outputs and compare without writing.

This is the difference between “we can backfill” and “we can backfill on a Tuesday without waking everyone up.”
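Two of these guardrails are small enough to sketch directly. The code below models Kafka headers as a list of (key, bytes) pairs, which is how common Python clients expose them, and shows a handler that always computes but only acts on replayed messages when explicitly enabled, plus a simple pacing throttle. The `handle` and `Throttle` names and the `allow_replay_side_effects` flag are hypothetical, and the throttle assumes that `sleep` returns on time.

```python
import time
from typing import Any, Callable, List, Optional, Tuple

Headers = List[Tuple[str, bytes]]


def replay_id(headers: Headers) -> Optional[str]:
    """Return the x-replay-id header value, or None for live traffic."""
    for key, value in headers:
        if key == "x-replay-id":
            return value.decode("utf-8")
    return None


def handle(
    event: Any,
    headers: Headers,
    compute: Callable[[Any], Any],
    act: Callable[[Any], None],
    allow_replay_side_effects: bool = False,
) -> Tuple[Any, bool]:
    """Always compute; run the side effect only for live traffic or when enabled."""
    result = compute(event)
    if replay_id(headers) is not None and not allow_replay_side_effects:
        return result, False  # computed, side effect suppressed
    act(result)
    return result, True


class Throttle:
    """Pace calls to at most `max_per_second` by sleeping between them."""

    def __init__(self, max_per_second: float,
                 clock: Callable[[], float] = time.monotonic,
                 sleep: Callable[[float], None] = time.sleep) -> None:
        self.interval = 1.0 / max_per_second
        self.clock = clock
        self.sleep = sleep
        self.next_allowed = clock()

    def wait(self) -> None:
        now = self.clock()
        if now < self.next_allowed:
            self.sleep(self.next_allowed - now)
            now = self.next_allowed  # assumes the sleep lasted as requested
        self.next_allowed = now + self.interval
```

In a replay job, calling `throttle.wait()` before each `handle(...)` keeps the backfill below a chosen rate, and the returned boolean can feed a dry-run report of which side effects would have fired.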

Versioned APIs in an event world

Versioning in Kafka is often skipped because it feels heavy. The irony is that avoiding versioning usually creates more work: consumers break silently, and internal tools become frozen in time.

Two pragmatic approaches

- Versioned topics: ...v1, ...v2. Clean break, explicit migration, easy rollback.

- Schema evolution within one topic: works when compatibility is enforced and semantics don’t shift under the same event name.

Use versioned topics when semantics change (meaning of fields, event meaning, ordering assumptions). Use evolution when you only add optional fields or widen types safely.
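During a migration between versioned topics, a consumer often has to read both versions for a while. One possible shape for that is a small normalizer that reads the version from the topic name and converts older payloads to the newest form. Everything specific in this sketch is invented for illustration: the topic names, the `amount` and `amount_cents` fields, and the assumption that v1 carried a decimal amount while v2 carries integer cents.

```python
from typing import Any, Dict


def topic_version(topic: str) -> int:
    """Extract N from a topic name ending in '.vN'."""
    suffix = topic.rsplit(".", 1)[-1]
    if not (suffix.startswith("v") and suffix[1:].isdigit()):
        raise ValueError(f"topic has no version suffix: {topic}")
    return int(suffix[1:])


def to_v2(topic: str, payload: Dict[str, Any]) -> Dict[str, Any]:
    """Normalize a payload from either version into the (hypothetical) v2 shape."""
    version = topic_version(topic)
    if version == 2:
        return dict(payload)
    if version == 1:
        # Semantic change: v1 'amount' is a decimal amount, v2 uses integer cents.
        return {
            "invoice_id": payload["invoice_id"],
            "amount_cents": round(payload["amount"] * 100),
        }
    raise ValueError(f"unsupported version {version} for topic {topic}")


if __name__ == "__main__":
    print(to_v2("billing.invoice.paid.v1", {"invoice_id": "i1", "amount": 12.5}))
```

Keeping the conversion in one function means the rest of the consumer only ever sees the newest shape, and the v1 branch can be deleted once the old topic is retired.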

End-to-end observability across producers, Kafka, and tools

Event-driven internal tools fail in ways that HTTP services don’t: consumers lag, partitions skew, offsets drift, schema changes land, or a backfill floods a sink. Observability must cover the whole path.

What to measure and why

- Producer health: publish rate, error rate, retries, message size distribution.

- Topic health: partition balance, retention, under-replicated partitions.

- Consumer health: lag by partition, processing time, DLQ rate, commit frequency.

- Business-level signals: event counts per tenant, drop/duplicate detection, “expected vs observed” checks.

For internal tools, the most important signal is often “did the derived output happen?” not “did the consumer run.” Tie output checks to each pipeline: rows written, tasks created, alerts emitted, states updated.
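Both kinds of signal reduce to simple arithmetic once the raw numbers are collected. The sketch below computes lag per partition from end offsets and committed offsets, and runs an "expected vs observed" check on a pipeline's output count. How the offsets and counts are fetched is left out, and the function names, the default tolerance, and the choice to treat a missing commit as offset 0 are assumptions.

```python
from typing import Dict


def lag_by_partition(end_offsets: Dict[int, int],
                     committed: Dict[int, int]) -> Dict[int, int]:
    """Lag = end offset minus committed offset, per partition.

    A partition with no committed offset is treated as committed at 0,
    which is a simplification (the log may start later than 0).
    """
    return {
        partition: max(end - committed.get(partition, 0), 0)
        for partition, end in end_offsets.items()
    }


def output_matches(events_in: int, outputs_written: int,
                   expected_ratio: float = 1.0,
                   tolerance: float = 0.01) -> bool:
    """Check that the derived output count is within tolerance of what is expected."""
    expected = events_in * expected_ratio
    if expected == 0:
        return outputs_written == 0
    return abs(outputs_written - expected) / expected <= tolerance


if __name__ == "__main__":
    print(lag_by_partition({0: 100, 1: 250}, {0: 100, 1: 200}))
    print(output_matches(events_in=1000, outputs_written=995))
```

A consumer can show zero lag and still fail the output check, which is exactly the case where "did the consumer run" and "did the derived output happen" diverge.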

Tracing and correlation IDs

Make correlation IDs mandatory. Propagate a stable identifier from the producer through Kafka to every internal tool. Then you can answer basic questions quickly:

- Which input event produced this alert?

- Why did the enrichment step skip this entity?

- Is this a replay artifact or real-time traffic?

When you emit traces and logs through OpenTelemetry, you can unify Kafka processing with service calls and database writes under one investigation workflow.
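A minimal sketch of correlation ID propagation, independent of any particular client library: read the ID from incoming headers, carry it (and every other header, including replay markers) onto outgoing messages, and include it in each structured log line. The header name `x-correlation-id` and the helper names are assumptions. Headers are modeled as (key, bytes) pairs.

```python
import json
import uuid
from typing import Any, List, Optional, Tuple

Headers = List[Tuple[str, bytes]]
CORRELATION_HEADER = "x-correlation-id"  # assumed header name


def correlation_id(headers: Headers) -> Optional[str]:
    """Return the correlation ID carried by the message, if any."""
    for key, value in headers:
        if key == CORRELATION_HEADER:
            return value.decode("utf-8")
    return None


def outgoing_headers(incoming: Headers) -> Headers:
    """Copy incoming headers, keeping the correlation ID or minting one if missing."""
    cid = correlation_id(incoming) or uuid.uuid4().hex
    others = [(k, v) for k, v in incoming if k != CORRELATION_HEADER]
    return others + [(CORRELATION_HEADER, cid.encode("utf-8"))]


def log_line(step: str, headers: Headers, **fields: Any) -> str:
    """Build a structured log line that always carries the correlation ID."""
    record = {"step": step, "correlation_id": correlation_id(headers), **fields}
    return json.dumps(record, sort_keys=True)


if __name__ == "__main__":
    incoming = [("x-replay-id", b"r-7"), (CORRELATION_HEADER, b"abc")]
    print(outgoing_headers(incoming))
    print(log_line("enrich", incoming, entity="c1", skipped=True))
```

Because replay headers travel alongside the correlation ID, a single log search can answer both "which input produced this?" and "was it replayed?".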

Shipping internal tools without a platform team

The core constraint isn’t Kafka. It’s coordination. Without a platform team, you need repeatable building blocks: scaffolds, templates, and conventions that reduce decision load.

A lightweight operating model

- Topic ownership: every contract topic has an owning team and a changelog.

- Consumer registration: list consumers and their purpose; treat it like dependency management.

- Schema PR process: schema changes require review and compatibility checks.

- Backfill runbooks: standardized replay steps and rollback procedures.

This pairs well with a single intake path for new tooling requests so event-driven work doesn’t become a second backlog. If your team is struggling with ad-hoc pings and tickets, align on an issue intake contract for a single prioritized backlog before adding more pipelines.
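Consumer registration from the list above can start as plain data in a repository plus one check. The sketch below compares a registry of declared consumers against the consumer groups actually observed on each topic and reports the ones nobody registered. The registry layout, topic and group names, and the function name are hypothetical, and how the observed groups are collected is out of scope here.

```python
from typing import Dict, Set

# Hypothetical registry: contract topic -> owner and declared consumers with purpose.
REGISTRY: Dict[str, dict] = {
    "billing.invoice.paid.v2": {
        "owner": "billing",
        "consumers": {
            "crm-enricher": "adds payment status to CRM accounts",
            "revenue-dashboard": "daily revenue rollups",
        },
    },
}


def unregistered_consumers(registry: Dict[str, dict],
                           observed: Dict[str, Set[str]]) -> Dict[str, Set[str]]:
    """Return, per topic, the observed consumer groups missing from the registry."""
    missing: Dict[str, Set[str]] = {}
    for topic, groups in observed.items():
        declared = set(registry.get(topic, {}).get("consumers", {}))
        extra = set(groups) - declared
        if extra:
            missing[topic] = extra
    return missing


if __name__ == "__main__":
    seen = {"billing.invoice.paid.v2": {"crm-enricher", "adhoc-script-42"}}
    print(unregistered_consumers(REGISTRY, seen))
```

Run on a schedule, a check like this turns "who depends on this topic?" from tribal knowledge into a reviewable diff.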

Where a code-first internal platform helps

You can implement everything above with bespoke services, but that’s exactly what creates accidental platform engineering. A code-first system that standardizes execution, scheduling, secrets, deployments, and logs reduces the “glue work” around event-driven tools.

windmill.dev is a practical fit when you want real code (Python/TypeScript/Go/SQL and more) for Kafka consumers, backfill jobs, and enrichment workflows, while keeping deployment and observability consistent across internal tools. In practice, that means:

- Shared templates for consumers and replayers.

- Workflow DAGs for multi-step processing (enrich → validate → write → notify).

- Central logs, alerts, and exports to OpenTelemetry/Prometheus.

- RBAC, secrets, and audit trails for sensitive pipelines.

The goal is not “a new platform.” It’s fewer bespoke snowflakes, so your Kafka topics can behave like stable, typed APIs that internal tools can safely depend on.

A practical first implementation plan

- Week 1: pick 1–2 contract topics. Add schemas, owners, and naming conventions.

- Week 2: add consumer templates with correlation IDs, structured logs, and DLQ handling.

- Week 3: implement one replayable backfill path with side-effect isolation and rate limits.

- Week 4: ship dashboards for lag, throughput, and output-level correctness checks.

After that, the pattern repeats: each new internal tool becomes “another client of a typed API,” not another fragile integration.